AI Charting in Behavioral Health: What Creates Real Value — and What Still Gets in the Way

Why behavioral health providers and private equity sponsors need to evaluate AI documentation tools in the real complexity of care delivery, not just in a polished demo.

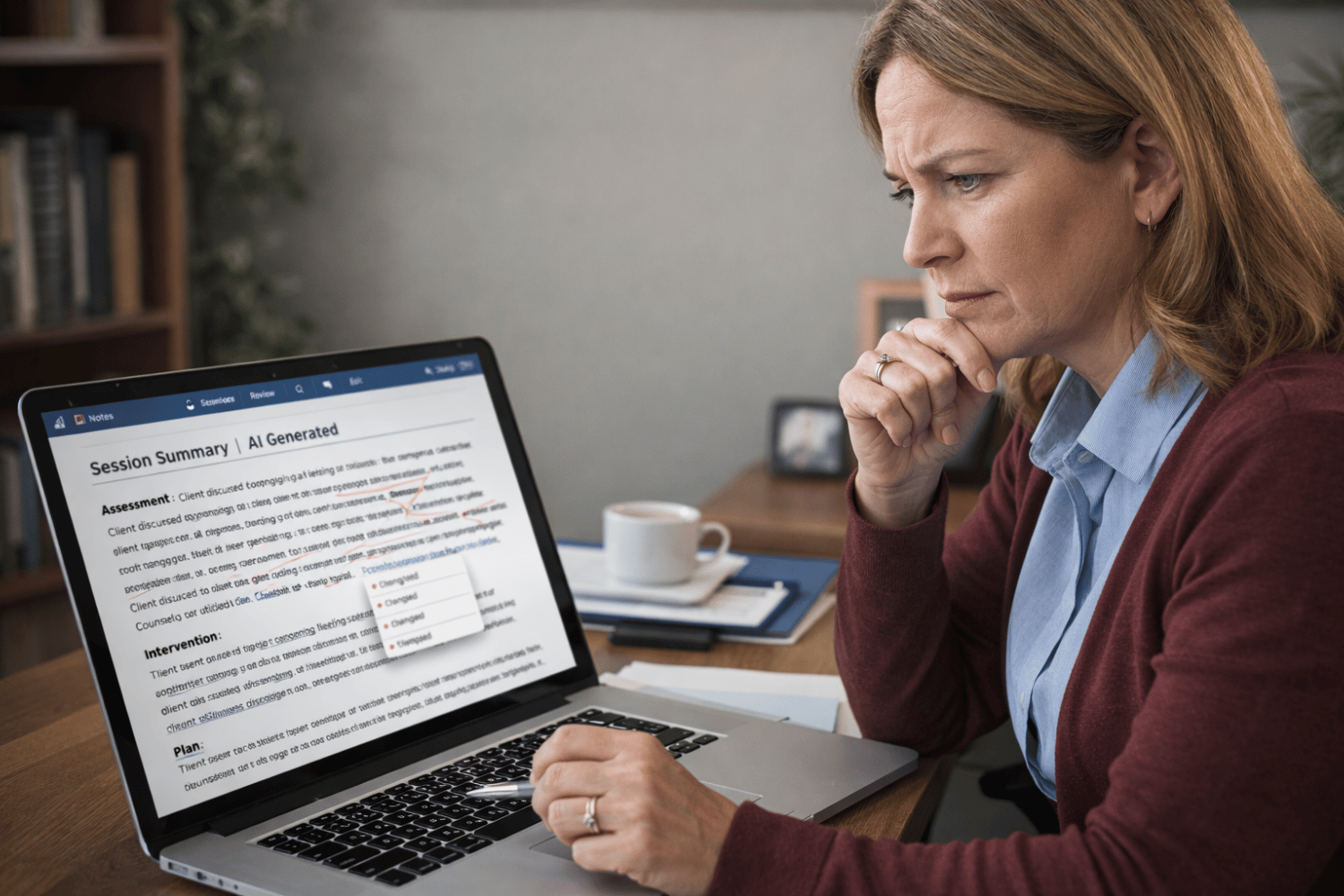

AI charting is getting real attention in behavioral health. The appeal is obvious: reduce documentation time, improve note consistency, ease clinician burden, and create a more scalable operating model.

After evaluating these tools in live behavioral health environments, my view is straightforward. The opportunity is real, but it is narrower and more conditional than many vendors suggest.

The biggest mistake is treating AI charting like a generic productivity tool. In behavioral health, performance depends heavily on the type of encounter, the care setting, the documentation standard, the integration model, and the willingness of clinicians to trust the output. A tool that works well in one scenario may struggle in another, and that gap matters when organizations try to scale adoption across programs.

Key Takeaways

From evaluating AI charting tools across behavioral health environments, several patterns emerged:

- Performance varies significantly depending on the type of clinical encounter

- Clinician trust is a major determinant of adoption

- Pilots require more time and broader testing than vendors often suggest

- Electronic medical record (EMR) integration and the handling of protected health information (PHI) introduce operational and compliance considerations

- AI charting works best when strong documentation workflows already exist

AI documentation tools can create real value, but only when evaluated in the full complexity of care delivery.

Not All Encounters Are Equal

One of the clearest findings from our evaluations was how much performance varied by interaction type.

Some tools performed reasonably well in straightforward one-on-one, face-to-face sessions. Once the workflow became more complex, the limitations became more visible.

Group therapy introduced challenges around speaker attribution, context, and summarization. Telehealth added variability tied to audio quality, connection stability, and conversational flow. Inpatient and higher-acuity settings created different issues, including shorter exchanges, interruptions, faster pace, and less predictable structure.

Intensive outpatient programs (IOP), partial hospitalization programs (PHP), intake assessments, family sessions, medication management visits, and crisis encounters all placed different demands on the documentation process.

That variability is often understated in product demos.

Behavioral health documentation is not one uniform workflow. The number of participants, treatment goals, note structure, and required level of nuance can differ significantly from one setting to another.

A tool that supports an individual therapy note may not translate cleanly to group or family sessions. A workflow that feels manageable in telehealth may break down in inpatient or higher-acuity environments.

This is why a narrow pilot can produce a misleading result. If only the cleanest use cases are tested, leadership may come away believing the platform is ready for broader deployment when it is not.
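One way to guard against a narrow pilot is to check how many reviewed notes exist for each encounter type before drawing conclusions. The following is a minimal Python sketch under stated assumptions, not a prescribed method: the encounter-type labels mirror the settings discussed in this article, while the `MIN_NOTES_PER_TYPE` threshold and the `coverage_gaps` function are hypothetical names and values chosen for illustration.

```python
from collections import Counter

# Encounter types named in this article; adjust to the organization's real mix.
ENCOUNTER_TYPES = [
    "individual", "group", "telehealth", "inpatient", "iop", "php",
    "intake", "family", "medication_management", "crisis",
]

# Hypothetical threshold: minimum reviewed notes per type before judging results.
MIN_NOTES_PER_TYPE = 20


def coverage_gaps(pilot_notes, minimum=MIN_NOTES_PER_TYPE):
    """Return {encounter_type: shortfall} for types with too few reviewed notes.

    `pilot_notes` is an iterable of encounter-type labels, one per reviewed note.
    """
    counts = Counter(pilot_notes)
    return {
        encounter_type: minimum - counts[encounter_type]
        for encounter_type in ENCOUNTER_TYPES
        if counts[encounter_type] < minimum
    }


if __name__ == "__main__":
    # Illustrative pilot that leans heavily on the cleanest use cases.
    sample = ["individual"] * 40 + ["telehealth"] * 25 + ["group"] * 5
    for encounter_type, shortfall in coverage_gaps(sample).items():
        print(f"{encounter_type}: needs {shortfall} more reviewed notes")
```

A pilot that reports strong results while most encounter types still show a shortfall has only been validated for the settings it actually covered.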

Clinician Resistance Is Part of the Evaluation

We also saw meaningful initial pushback from trained clinicians. That should not be dismissed as simple resistance to change.

In many cases, the concern was justified. Behavioral health clinicians are trained to work with nuance, trust, observation, and judgment. They are understandably cautious about tools that may flatten a complex interaction into something that sounds polished but misses what mattered clinically.

Some questioned whether the note accurately reflected the encounter. Others were concerned that the output sounded generic, overstated certainty, or failed to capture the real clinical significance of what was said.

In group settings especially, clinicians were sensitive to whether the note fairly represented the interaction and whether the technology could distinguish between useful detail and noise.

That reaction is part of the signal.

If clinicians do not trust the output, adoption will lag. If they have to rewrite large portions of the note, the efficiency gain shrinks quickly. If they believe the tool interferes with the patient interaction or creates risk, they will work around it.

Adoption is not something to address later. It is one of the clearest indicators of whether the solution is viable at all.

Pilots Take Longer Than Vendors Suggest

Another practical lesson: pilots took longer than vendors said they would.

A meaningful evaluation requires more than asking whether users like the tool. It takes time to test multiple encounter types, compare note quality across clinicians, assess how much editing is required, and determine whether the workflow holds up outside the vendor’s best-case scenario.

It also takes time for clinicians to become comfortable enough with the process to produce representative feedback.

A short pilot may tell you whether the product is interesting. It usually does not tell you whether it is operationally ready.

We also ran into a recurring integration issue. In many cases, the generated note did not flow directly back into the EMR through a native integration or structured API workflow. Instead, the note had to be copied and pasted into the clinical record.

That was not always a major burden, but it added friction and created another handling point for protected health information outside the core system.

From a technical and compliance standpoint, that matters.

When notes, transcripts, or draft summaries live in a separate application, organizations need to understand where the data resides, how long it is retained, whether it is encrypted in transit and at rest, how access is controlled, and whether audit logs are available.

Even if the external workflow is temporary, it still creates another boundary where PHI must be governed carefully.
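The governance questions above can be tracked as a simple structured record during vendor review. This is an illustrative Python sketch only: the `PhiHandlingReview` class, its field names, and the helper functions are hypothetical, and the example answers are invented placeholders rather than findings about any vendor.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class PhiHandlingReview:
    """Answers gathered from a vendor; None means the question is still open."""
    data_location: Optional[str] = None
    retention_period_days: Optional[int] = None
    encrypted_in_transit: Optional[bool] = None
    encrypted_at_rest: Optional[bool] = None
    access_control_model: Optional[str] = None
    audit_logs_available: Optional[bool] = None


def open_questions(review):
    """List the fields the vendor has not yet answered."""
    return [f.name for f in fields(review) if getattr(review, f.name) is None]


def failed_checks(review):
    """List the yes/no questions that were explicitly answered 'no'."""
    return [f.name for f in fields(review) if getattr(review, f.name) is False]


if __name__ == "__main__":
    # Placeholder answers for illustration only.
    review = PhiHandlingReview(
        data_location="vendor-hosted cloud",
        encrypted_in_transit=True,
        encrypted_at_rest=False,
    )
    print("Still open:", open_questions(review))
    print("Answered no:", failed_checks(review))
```

Keeping unanswered questions separate from failed ones makes it harder for an open item to be mistaken for a passed one when the review is summarized for leadership.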

Where the Value Is Real

None of this means AI charting lacks value. It means the value is not automatic.

In the right setting, these tools can reduce time spent drafting notes, improve consistency in format and completeness, and lessen some of the after-hours documentation burden that contributes to clinician fatigue.

They may be especially useful where the organization already has clear standards for what a good note should include and where the encounter type is reasonably consistent.

The evaluation process can also surface broader operational issues. In some cases, the larger problem is not the charting tool itself but the absence of standardized documentation expectations, inconsistent supervisory review, or unclear workflow design.

AI can support a stronger process, but it does not create one on its own.

A good tool can improve a good workflow. It usually will not fix a weak one.

What Leaders Should Actually Evaluate

The right questions are more operational than promotional.

Can the tool perform across the organization’s real mix of encounters, including individual therapy, group, telehealth, inpatient, IOP, PHP, intake, family, and medication-related visits?

Is the output clinically sound, not just readable? Would a supervisor or clinical leader be comfortable with it in the chart?

How much editing is required before the note is usable? If review time remains high, the productivity benefit may be marginal.
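Editing burden can be approximated by comparing the AI draft with the note the clinician finally signs. The sketch below uses Python's standard-library `difflib.SequenceMatcher` at the word level; it is one rough proxy under stated assumptions, not a validated clinical quality measure. The function names and the sample notes are hypothetical.

```python
from collections import defaultdict
from difflib import SequenceMatcher
from statistics import mean


def edit_fraction(draft: str, final: str) -> float:
    """Approximate share of the note that changed between draft and signed note.

    Compares word sequences; 0.0 means identical, 1.0 means nothing in common.
    """
    draft_words = draft.split()
    final_words = final.split()
    if not draft_words and not final_words:
        return 0.0
    similarity = SequenceMatcher(None, draft_words, final_words, autojunk=False).ratio()
    return 1.0 - similarity


def mean_edit_fraction_by_encounter(samples):
    """Average edit fraction per encounter type.

    `samples` is an iterable of (encounter_type, draft_text, final_text) tuples.
    """
    grouped = defaultdict(list)
    for encounter_type, draft, final in samples:
        grouped[encounter_type].append(edit_fraction(draft, final))
    return {encounter_type: mean(values) for encounter_type, values in grouped.items()}


if __name__ == "__main__":
    # Invented example text, not real clinical documentation.
    samples = [
        ("individual", "client reports improved sleep", "client reports improved sleep"),
        ("group", "client reports improved sleep", "client reports poor sleep"),
    ]
    for encounter_type, value in mean_edit_fraction_by_encounter(samples).items():
        print(f"{encounter_type}: {value:.2f}")
```

A low edit fraction does not prove the note is clinically sound, since a clinician may sign a flawed draft unchanged. The measure is most useful alongside supervisor review and broken out by encounter type.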

How does data move between the AI platform and the EMR? Is there a native integration, secure export, or API-based workflow, or does the process still rely on manual copy and paste?

Where are recordings, transcripts, or drafts stored, and what are the retention, access-control, and audit-log capabilities?

Do clinicians believe the tool supports their work without undermining how they practice?

Those are the questions that determine whether the tool creates durable value or simply creates the appearance of modernization.

What Private Equity Should Take from This

For sponsors, AI charting in behavioral health is not just a software decision. It is an operating model decision.

A company may report that it is piloting or using AI documentation tools and still be far from realizing meaningful value.

The real issues are whether the tool works across the care model, whether clinicians adopt it, whether the data-handling model is sound, whether the EMR workflow is practical, and whether management can show measurable improvement in documentation burden and note quality.

This category can sound more mature than it is. In practice, performance varies by setting, implementation takes longer than expected, and the workflow often remains less seamless than the sales narrative implies.

That does not reduce the importance of AI charting.

It increases the importance of evaluating it with discipline.

Assessing technology risk in a healthcare platform?

IT Ally helps private equity sponsors evaluate technology environments across the full investment lifecycle, from diligence through value creation and exit.

Learn more about IT Ally Healthcare and evaluate technology risk across your healthcare portfolio:

https://healthcare.itallyllc.com/